It is undeniable that Artificial Intelligence (AI) has been a critical factor in the development of many industries. However, little is said about the environmental consequences of its use.

What are carbon emissions?

First, it must be understood that carbon dioxide (CO2), a molecule composed of carbon and oxygen, is a gas naturally found in several of the Earth's reservoirs: the atmosphere, the biosphere, the hydrosphere, and the lithosphere (its movement among these makes up the carbon cycle). In the atmosphere, it absorbs infrared radiation emitted by the Earth's surface and re-emits part of it back toward the ground, which keeps that heat from escaping to space.

So, if this gas is naturally present in the atmosphere, how does it contribute to global warming? Simple: human activity (the burning of fossil fuels, deforestation, electricity generation, etc.) dramatically increases the concentration of carbon dioxide beyond what the Earth's natural cycle can absorb.

Because CO2 traps heat, a higher concentration warms the planet more. CO2 is one of the “greenhouse gases” (GHGs), and the warming these gases produce is called the “greenhouse effect.” Carbon emissions, therefore, refer to the release of CO2 and other GHGs by human industrial activities that cause global warming.

The carbon footprint

According to the climate-change mitigation and carbon footprint expert Sebastián Galbusera, in an interview conducted by National Geographic, “carbon footprint” refers to “the amount of greenhouse gas emissions that were emitted into the atmosphere through some human activity, which can be a product or a service, or by the daily action of an inhabitant.”

A personal carbon footprint is produced by a single individual carrying out daily activities, such as using energy and cooking and processing food. The average individual's carbon footprint is 4 tons of CO2 per year. Ideally, it should be between 2 and 2.5 tons.
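The gap between the average and the ideal footprint can be made concrete with a little arithmetic. The following is a minimal sketch that uses only the figures quoted above; the function and variable names are illustrative.

```python
# Figures quoted in the text, in tons of CO2 per person per year.
average_footprint = 4.0
ideal_low, ideal_high = 2.0, 2.5


def required_cut(current: float, target: float) -> float:
    """Fraction by which `current` must fall to reach `target`."""
    return (current - target) / current


# Reaching the ideal range means cutting the average footprint
# by a fraction somewhere between these two values.
cut_min = required_cut(average_footprint, ideal_high)
cut_max = required_cut(average_footprint, ideal_low)

print(f"Required reduction: {cut_min:.1%} to {cut_max:.1%}")
```

In other words, the average person would need to cut emissions by roughly a third to a half to reach the ideal range.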

The impact of AI on the environment

It is essential to understand that global emissions are concentrated in the energy, transport, industry, construction, and agriculture sectors. In the technology sector, the use of AI depends on electrical power. Specifically, the electricity and heat production industry has experienced a significant increase of 46% in global emissions.

There is growing concern about emissions from electricity generation, but how does AI's electricity use contribute to CO2 emissions? To answer this question, we must first know how AI works, not at the user level but at the operational level.

What is a data center?

A data center is a physical space that assembles the necessary infrastructure to process, order, protect, and preserve information. It ensures the efficient functioning of various sectors in the digital society by enabling connectivity and online services.

A data center consists of servers (specialized computers with a lot of power), storage systems (high-capacity hard drives), networks (which allow connectivity and communication), cooling systems (to maintain the right temperature and prevent equipment from overheating), and security (against unauthorized people or natural disasters).

There are diverse types of data centers: corporate, housing (colocation), hosting (managed), and cloud (cloud storage). These spaces can be classified according to their infrastructure: hyperscale, modular, and underground. Examples of well-known data centers include Google Data Center, Amazon Web Services, and Microsoft Azure.

Notably, the above data centers are hyperscale, meaning they are among the largest and most powerful in the world. To be classified as such, a facility must have at least 5,000 servers, occupy at least 930 square meters, and deliver at least 40 MW (megawatts)* of capacity.

*1 megawatt is equivalent to one million watts or one thousand kilowatts.
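The three hyperscale thresholds can be expressed as a simple check. This is only a sketch: the `DataCenter` class and the two sample facilities are hypothetical and invented for illustration, while the thresholds are the ones quoted in the text.

```python
from dataclasses import dataclass


@dataclass
class DataCenter:
    servers: int
    floor_area_m2: float
    capacity_mw: float


# Minimum thresholds quoted in the text.
MIN_SERVERS = 5000
MIN_AREA_M2 = 930
MIN_CAPACITY_MW = 40


def is_hyperscale(dc: DataCenter) -> bool:
    """True only if the facility meets all three minimum thresholds."""
    return (
        dc.servers >= MIN_SERVERS
        and dc.floor_area_m2 >= MIN_AREA_M2
        and dc.capacity_mw >= MIN_CAPACITY_MW
    )


def megawatts_to_watts(mw: float) -> float:
    """1 MW = 1,000,000 W."""
    return mw * 1_000_000


# Hypothetical facilities, not real data centers.
small = DataCenter(servers=800, floor_area_m2=400, capacity_mw=2)
large = DataCenter(servers=12000, floor_area_m2=5000, capacity_mw=60)

print(is_hyperscale(small), is_hyperscale(large))
print(megawatts_to_watts(MIN_CAPACITY_MW))
```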

To understand the scope of these spaces, consider that in 2020 alone, more than half of all data processed globally went through at least one hyperscale facility.

Data centers, AI, and power consumption

A ChatGPT query uses about ten times more electricity than a Google search (2.9 Wh vs. 0.3 Wh*). According to the Goldman Sachs Research report Generational Growth: AI, Data Centers and the Coming US Power Demand Surge, this trend in AI use and data centers will significantly increase energy demand. The report forecasts a 15% increase in AI energy demand between 2023 and 2030, estimating that 47 GW (gigawatts)** of capacity will be required to meet this industry’s growth demand.

*Wh equals watt-hours.

**1 gigawatt is equivalent to one billion watts.
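To see what the per-query figure means at scale, the sketch below takes two claims from the text (2.9 Wh per ChatGPT query and a tenfold ratio to a search) and applies them to a workload. The one million queries per day is a hypothetical number chosen only for illustration.

```python
CHATGPT_QUERY_WH = 2.9  # from the text
RATIO_TO_SEARCH = 10    # "ten times more", from the text

# Energy per search implied by the two figures above.
implied_search_wh = CHATGPT_QUERY_WH / RATIO_TO_SEARCH


def daily_energy_kwh(queries_per_day: int, wh_per_query: float) -> float:
    """Convert a daily query count into kilowatt-hours (1 kWh = 1000 Wh)."""
    return queries_per_day * wh_per_query / 1000


# Hypothetical workload, not a measured figure.
queries = 1_000_000
chat_kwh = daily_energy_kwh(queries, CHATGPT_QUERY_WH)
search_kwh = daily_energy_kwh(queries, implied_search_wh)

print(f"Implied energy per search: {implied_search_wh:.2f} Wh")
print(f"ChatGPT: {chat_kwh:.0f} kWh/day, search: {search_kwh:.0f} kWh/day")
```

Under these assumptions, a million AI queries draw close to 3 MWh per day, against roughly 0.3 MWh for the same number of searches.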

The International Energy Agency (IEA) states that electricity demand will increase by 5% by 2025, or 80 TWh* of annual energy consumption. Likewise, the IEA established that data center electrical consumption is between 1 and 1.3% of total power and is expected to rise to 4% by the end of the decade.

*TWh refers to terawatt hours

Google’s report states that, according to IEA estimates, the electricity consumed by its data centers (exceeding 24 TWh in 2023) accounts for 7-10% of global data center electricity consumption and 0.1% of global electricity demand.
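The percentages in Google's report imply rough totals for the world's data centers and for global electricity demand. The following back-of-the-envelope sketch derives them; since the text says Google's consumption "exceeded" 24 TWh, the results are approximate lower bounds rather than official figures.

```python
google_twh = 24.0                  # Google data centers, 2023 (from the text)
share_of_dc_low = 0.07             # 7% of global data center consumption
share_of_dc_high = 0.10            # 10% of global data center consumption
share_of_global_demand = 0.001     # 0.1% of global electricity demand

# If 24 TWh is 10% of the total, the total is 240 TWh;
# if it is only 7%, the total is larger.
dc_total_low = google_twh / share_of_dc_high
dc_total_high = google_twh / share_of_dc_low
global_total = google_twh / share_of_global_demand

# Share of global electricity demand used by all data centers.
dc_share_low = dc_total_low / global_total
dc_share_high = dc_total_high / global_total

print(f"Global data centers: {dc_total_low:.0f} to {dc_total_high:.0f} TWh")
print(f"Global demand: {global_total:.0f} TWh")
print(f"Data center share: {dc_share_low:.1%} to {dc_share_high:.1%}")
```

The implied data center share, between about 1% and 1.4%, is broadly in line with the 1 to 1.3% range attributed to the IEA above.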

The energy and economic costs of AI

One reason for this increase in energy demand is the growing number of parameters in large language models (LLMs) and large multimodal models (LMMs). Parameters are the values a model learns during training, which determine how it processes information, generates responses, and adapts its behavior. The training, inference, and maintenance of AI models add to the demand.

This increase in the scale of model parameters is exemplified by Google BERT-large (350 million parameters), OpenAI GPT2-XL (1.5 billion), NVIDIA Megatron-LM (8 billion), Google T5-11B (11 billion), and OpenAI GPT-3 (175 billion).

Similarly, the models’ training hours translate into higher electricity and economic expenditures (Table 3). OpenAI GPT2-XL was trained on 40 billion words, and RoBERTa (Meta/Facebook AI) was trained on 160 GB of text (roughly the same amount as GPT2-XL). Both required approximately 25,000 GPU hours* to train.

*GPU: graphics processing unit, a processor specialized for parallel computation. A GPU hour is one hour of work on one GPU, roughly equivalent to 20 hours on a CPU (a computer's general-purpose processor).
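GPU hours can be turned into a rough energy and emissions estimate. The sketch below uses the 25,000 GPU hours and the 20-to-1 CPU equivalence from the text; the power draw per GPU, the data center overhead factor (PUE), and the grid carbon intensity are hypothetical placeholder values, not figures from the article, and real values vary widely by hardware and location.

```python
GPU_HOURS = 25_000            # from the text
CPU_HOURS_PER_GPU_HOUR = 20   # from the footnote

# Assumptions for illustration only (not from the article):
GPU_POWER_KW = 0.3            # average draw of one GPU, in kilowatts
PUE = 1.5                     # overhead for cooling, power delivery, etc.
GRID_KG_CO2_PER_KWH = 0.4     # carbon intensity of the electricity used


def training_energy_kwh(gpu_hours: float, gpu_power_kw: float, pue: float) -> float:
    """Facility-level energy: GPU energy scaled by the overhead factor."""
    return gpu_hours * gpu_power_kw * pue


energy_kwh = training_energy_kwh(GPU_HOURS, GPU_POWER_KW, PUE)
emissions_t = energy_kwh * GRID_KG_CO2_PER_KWH / 1000  # kg -> metric tons
cpu_hours = GPU_HOURS * CPU_HOURS_PER_GPU_HOUR

print(f"Energy: {energy_kwh:.0f} kWh, emissions: {emissions_t:.1f} t CO2")
print(f"Equivalent CPU hours: {cpu_hours}")
```

Changing any of the three assumed values changes the result proportionally, which is why published estimates for the same model can differ so much.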

Tech companies and their environmental impact

According to studies, training GPT-3 emitted 552 tons of CO2, and the model is estimated to produce a further 8.4 tons of CO2 annually.

Google’s most recent Environmental Report 2024 states that its GHG emissions increased by 13% in 2023 due to two crucial factors: (1) an increase in electricity consumption by its data centers and (2) emissions from its distribution and production chain (supply chain). More specifically, Google’s data centers alone increased their electricity consumption by 17% in 2023 despite having renewable energy programs.

Microsoft’s 2024 Environmental Sustainability Report mentions an overall increase in its emissions of 29.1%. The company also has a significant water footprint: 700,000 liters of fresh water were used to train GPT-3 in its data centers, the amount needed to produce 370 cars. For perspective, a simple conversation with ChatGPT (20 to 50 questions) consumes 500 ml of water.
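The water figures are easier to compare once they are reduced to per-unit values. A minimal sketch using only the numbers quoted above (variable names are illustrative):

```python
SESSION_WATER_ML = 500               # per conversation, from the text
QUESTIONS_MIN, QUESTIONS_MAX = 20, 50

# Water per question: more questions per session means less water each.
ml_per_question_high = SESSION_WATER_ML / QUESTIONS_MIN
ml_per_question_low = SESSION_WATER_ML / QUESTIONS_MAX

TRAINING_WATER_L = 700_000           # GPT-3 training, from the text
CARS = 370                           # equivalent car production, from the text

liters_per_car = TRAINING_WATER_L / CARS
# How many 500 ml conversations equal the training water.
sessions_equivalent = TRAINING_WATER_L * 1000 / SESSION_WATER_ML

print(f"{ml_per_question_low:.0f} to {ml_per_question_high:.0f} ml per question")
print(f"About {liters_per_car:.0f} liters per car")
print(f"Training water equals {sessions_equivalent:,.0f} conversations")
```

By these figures, the water used to train GPT-3 matches what about 1.4 million short conversations would consume.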

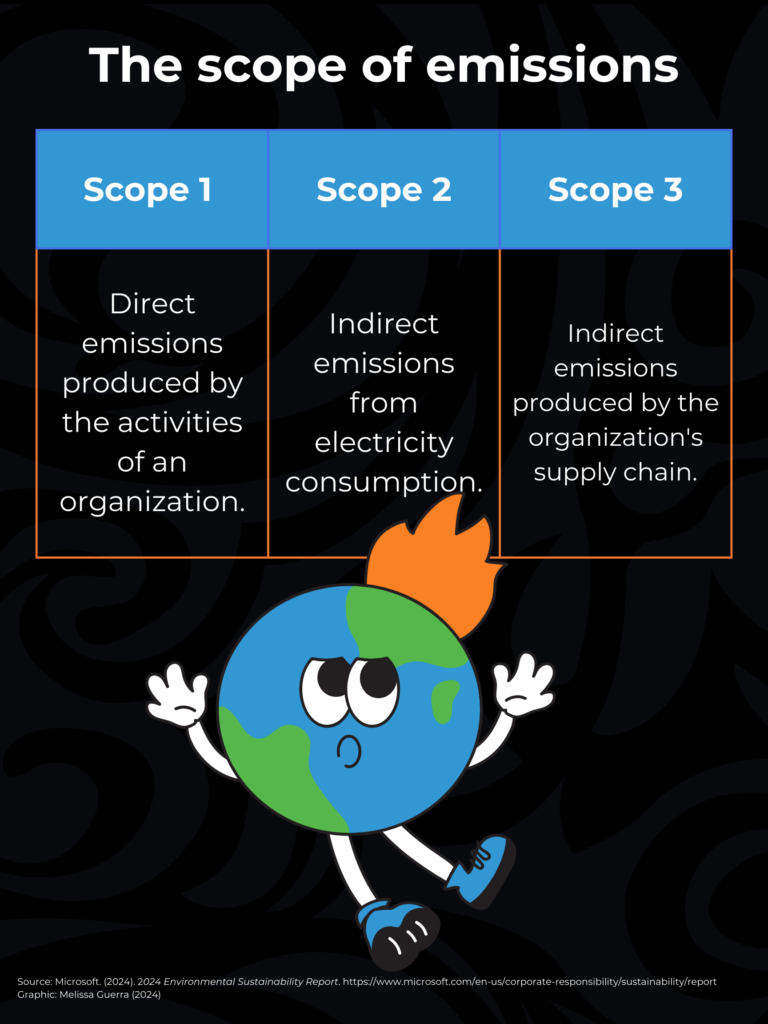

The scope of emissions

To understand the management of information in these reports, it is essential to comprehend several concepts:

- Operational carbon: emissions associated with the energy required for a building or infrastructure to function (ventilation, lighting, etc.).

- Embodied carbon: emissions associated with any process, material, or product used to construct, maintain, repair, renovate, or demolish a building or infrastructure.
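One common way to combine the two concepts is to spread embodied carbon over the facility's lifetime and add it to the yearly operational emissions. The sketch below assumes an even (linear) spread, which is a simplification, and all input values are hypothetical.

```python
def annual_footprint_t(
    operational_t_per_year: float,
    embodied_t: float,
    lifetime_years: float,
) -> float:
    """Yearly footprint in tons of CO2: operational emissions plus an
    equal yearly share of the one-time embodied emissions."""
    if lifetime_years <= 0:
        raise ValueError("lifetime_years must be positive")
    return operational_t_per_year + embodied_t / lifetime_years


# Hypothetical facility: 1,000 t/year to run, 5,000 t to build,
# expected to operate for 20 years.
total = annual_footprint_t(
    operational_t_per_year=1000.0,
    embodied_t=5000.0,
    lifetime_years=20,
)
print(f"Annualized footprint: {total:.0f} t CO2 per year")
```

The point of the split is that switching to clean electricity lowers only the operational term; the embodied term remains unless construction and hardware also change.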

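To measure the carbon footprint, a standard called the GHG Protocol divides emissions into three categories, or scopes:

- Scope 1: direct emissions from sources the organization owns or controls.

- Scope 2: indirect emissions from the purchased electricity, heat, steam, or cooling it consumes.

- Scope 3: all other indirect emissions in its value chain, such as those of suppliers and of customers using its products.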

What is being done to combat the carbon footprint?

Currently, modern models follow a trend known as Red AI: research that pursues higher accuracy through massive computation, including more training data and more experiments. This technology is costly (in economic and energy terms) to train and run because it prioritizes accuracy over efficiency.

In contrast, Green AI considers computational costs and promotes efficiency (cost reduction) while still achieving favorable performance. It incorporates efficiency measures to reduce economic and ecological costs. These measures include carbon emissions, electricity usage, the number of floating point operations (FPO), and the number of parameters per model, all of which help quantify a model's footprint.
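To illustrate how floating point operations and parameters can be counted, the sketch below tallies both for a small fully connected network. It assumes one common convention (each multiply and each add counts as one operation) and a hypothetical layer layout; it is not the measurement procedure of any particular study.

```python
def dense_layer_cost(n_inputs: int, n_outputs: int) -> tuple[int, int]:
    """Return (floating point operations, parameters) for one dense layer
    applied to a single input vector.

    Each output needs n_inputs multiplications and n_inputs additions
    (n_inputs - 1 to sum the products, plus 1 for the bias).
    """
    fpo = 2 * n_inputs * n_outputs
    params = n_inputs * n_outputs + n_outputs  # weights + biases
    return fpo, params


def network_cost(layer_sizes: list[int]) -> tuple[int, int]:
    """Sum the cost of every consecutive pair of layers."""
    total_fpo = 0
    total_params = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        fpo, params = dense_layer_cost(n_in, n_out)
        total_fpo += fpo
        total_params += params
    return total_fpo, total_params


# Hypothetical network: 784 inputs, one hidden layer of 256, 10 outputs.
fpo, params = network_cost([784, 256, 10])
print(f"{fpo} floating point operations per input, {params} parameters")
```

Unlike run time or electricity use, a count like this depends only on the model, not on the hardware, which makes it convenient for comparing models across labs.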

Strategies

Aware of the environmental impact, Google and Microsoft have established measures towards sustainability with AI. These include:

- Integrate carbon-free energy (CFE). For example, Google plans to buy 4 GW of clean energy.

- Achieve net-zero emissions by 2030, both for operations and the value chain.

- Develop AI responsibly, addressing the environmental footprint through model optimization, efficient infrastructure, and emissions reductions.

- Adopt water-positive strategies (sustainable water management and restoration). For example, Google’s climate-conscious cooling approach guides its choice of cooling solutions.

- Pursue zero-waste strategies (reduction and reuse) in data centers and infrastructure.

It is not all bad news: some studies indicate that AI has the potential to mitigate global GHG emissions by 5 to 10% by 2030. Likewise, various AI tools already help identify the carbon footprint of models and systems by analyzing, optimizing, and building strategies that reduce greenhouse gas emissions.

Although there is a long way to go, everyone must be aware that helping the planet is a shared responsibility. AI has increased energy consumption, but we must not forget that the negative environmental impact is also caused by fossil fuels, aviation, agriculture, construction, and transport; in other words, by all human activity.

Translated by Daniel Wetta

This article from Observatory of the Institute for the Future of Education may be shared under the terms of the license CC BY-NC-SA 4.0
