If you remember, a few years ago, during the COVID-19 pandemic, “metaverse” was the fashionable word for something new that would revolutionize education and give us remote access to interactive, dynamic virtual environments. The commitment to this space was so significant that Facebook (now renamed Meta) invested over 40 billion dollars in the technology. Needless to say, the expectations for this initiative have not yet materialized, and numerous institutions have abandoned the metaverse or put it on the back burner to direct their attention to the hot new trend, artificial intelligence (AI).

Therefore, following the first part of this article, published in June 2023, and playing devil’s advocate, I invite readers to stroll through what the consulting firm Gartner calls the Trough of Disillusionment and consider: What if the solution to our educational challenges is not artificial intelligence?

Questioning skeptically and thoughtfully may not put us in the front ranks of the trend. Still, it is a responsible way of examining the expected (and unexpected) impacts of AI, and it strengthens our creative thinking by making us reflect: “What is it that we cannot (or do not want to) fathom about the proposals for AI development, and what else should we realize?” Skepticism is not new; it has been practiced since ancient times and is applied today in fields such as disaster risk reduction and cybersecurity. It is valuable to remember that each new technology, e.g., nuclear energy, AI imaging, or AI audio, also brings undesired uses, which is why we must commit to global regulatory bodies that prevent their improper use.

In words cited by Richard Aldrich, an education historian, “We are entering an era when a single person can, by one clandestine act, cause millions of deaths or render a city uninhabitable for years” (Aldrich, 2010, p. 2; Rees, 2003, cited by Aldrich, 2010). Pondering this frightening scenario, what are some possible risks that education faces from using artificial intelligence?

To consider this, I suggest examining two questions:

- Who designs artificial intelligence for education?

- Who owns the education data?

Who designs artificial intelligence for education?

Discussions about education usually assume implicit trust in an authority, be it a teacher or an institution and its services (such as a platform or AI). Although conversational AI does not let us interact with its creators, the scope and limitations of their designs communicate what they want us to achieve and what they do not. For the most part, we may find a consensus based on human rights, whose foundations can be shared by multiple countries. But when we consider individual people, what do we expect AI to do to help us grow?

The ability of AI to influence a child’s development, for example, is one of the concerns that have been raised, given its possible detrimental effects; according to UNICEF, this topic has not been deeply explored. According to the Pew Research Center (2020), more than 50% of parents of children between 3 and 4 years old report that their children use digital devices such as televisions, smartphones, and tablets. Many of these children have continuous unsupervised interactions with voice-activated smart assistants, such as Alexa and Apple’s Siri, which shape their psychosocial development and perception of the world in ways that require further attention and research. Although it may not have been documented, a few weeks ago I heard a story about an elementary school teacher who said that some children did not call her “mom” but “Alexa” instead. Could this have implications for attachment theory?

Similarly, what role do we expect AI to play in lifelong learning? How much weight should our previous experiences carry in our future plans? Specifically, a recommendation system that emphasizes our prior interests can shape or even reinforce our preferences, as an article published in the MIT Sloan Management Review (MIT SMR) reports. Thus, the best interests of the user could be constrained by the educational philosophies and interests of the systems’ providers, academic institutions, or governments, running the risk of excluding learning paths in areas such as sports, arts, and humanities in favor of others associated with the STEM fields (Science, Technology, Engineering, and Mathematics).

Who owns the education data?

While machines require energy resources such as electricity to perform their jobs, artificial intelligence requires another particular supply: data.

The collection of educational data, notably, is not uniform across institutions. The information gathered from interactions in educational communities can vary drastically because of differences in enrollment, curricula, academic policies, and data collection practices. Also, quantity does not translate into quality. A further challenge is that data curation policies, and the quality with which AI systems are trained, differ from one institution to another.

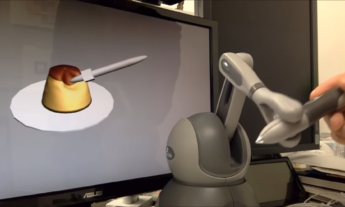

The ownership of educational data generated through the use of artificial intelligence poses an additional dilemma: multiple initiatives rely on visual computing for safety reasons or to assess the classroom experience, but these violate the privacy of students, teachers, and others. Ultimately, it is up to these individuals to decide what to do with their personal data, which is leading institutions to be much more transparent about how that data is obtained, stored, used, and deleted, as is the case with the ViziBlue project at the University of Michigan.

Conclusions

What’s next after artificial intelligence?

A constant that led me to write this article is the idea of defending the human role of the teacher. In 2023, the report “An Ed-tech Tragedy? Educational Technologies and School Closures in the Time of COVID-19” highlighted multiple valuable conclusions, such as the importance of not making false educational promises based on unvalidated educational methodologies (p. 424), the priority of creating codes, regulations, and legislation that put students first (p. 453), and the reminder that education should be oriented towards achieving student-focused objectives and not simply react to technological changes, considering that technology is a means, not an end.

The findings of this report suggest that integrating artificial intelligence is not yet appropriate for educational institutions, at any level, that are just beginning to understand their data and lack the technological maturity to evaluate the benefits and risks of providing it. This includes feeding sensitive data on the identity and activities of students into models that have not been validated and whose decisions cannot be explained, as explainable artificial intelligence (xAI) would require.

In closing, I must admit that I am confident that artificial intelligence has the potential to be of great support in education. As Bloom (1984) imagined several decades ago, providing every student with a personalized tutor considerably increases their performance in acquiring knowledge and skills. This possibility could be within the reach of many students in the future. However, as we pointed out in our chapter about the possibilities of artificial intelligence for mental health, it is essential to remember that the emergence of AI tutors should mean support for human teachers, not their replacement by those who cannot afford to pay them.

Gerardo Castañeda Garza holds a Ph.D. in Educational Innovation from Tecnologico de Monterrey.

Currently, he serves as Data Acquisition Coordinator at the Living Lab & Data Hub of the Institute for the Future of Education at Tecnologico de Monterrey. He has collaborated on interdisciplinary projects internationally in areas such as education, sustainability, and disaster risk reduction. As a professor of digital humanities, he seeks to promote ethical and responsible thinking about the possibilities of the future in our relationship with technology, rethinking the purposes of life in the face of new scenarios.

He volunteers in the international network of researchers TRANSFORM to promote community resilience to the effects of climate change. He enjoys green tea and Indian food.

This article from Observatory of the Institute for the Future of Education may be shared under the terms of the license CC BY-NC-SA 4.0
