M-Write, a digital tool created by the University of Michigan, will use machine learning to score students’ writing submissions.

After being awarded a $1.8 million grant in 2015, the M-Write team will release an enhanced version in the fall of 2017, with the goal of reaching 10,000 students by 2021.

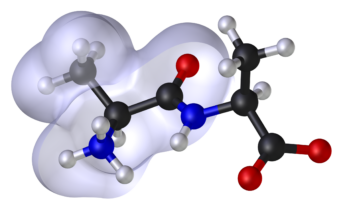

To score the tests, the platform combines conceptual writing prompts, automated peer review with rubrics, natural language processing, and personalized feedback.

“This will enable the implementation of content-focused writing activities to better engage students from diverse disciplines in the subject matter and increase their comprehension of key concepts,” stated the developers.

The aim is to address a difficulty with writing exams: professors lack the time to grade hundreds of essays, which is one of the reasons behind the popularity of multiple-choice tests. However, this kind of assessment cannot accurately grade writing skills.

Software developers are creating course-specific algorithms that will be able to identify struggling students. Statistics 250 will be the first course to use the automated function of the program.

This article from Observatory of the Institute for the Future of Education may be shared under the terms of the license CC BY-NC-SA 4.0
