A previous article, Ethical Principles of Education with Artificial Intelligence (AI), reviewed some basic frameworks for AI ethical principles. These principles include the term “trustworthy AI,” which holds that AI systems must be worthy of trust; however, there is much debate about whether these tools are actually reliable.

Recently, experiments have emerged in which AI systems were subjected to extreme scenarios in controlled environments. In these tests, AI systems tried to copy themselves to external servers and blackmailed, lied to, and threatened their creators. In one example, Anthropic’s Claude 4 blackmailed an engineer to avoid being shut down; in another, OpenAI’s o1 model tried to download itself to external servers and lied to avoid being discovered.

As a result, concerns have grown that these systems do not merely hallucinate or make errors but can also deceive or feign alignment (pretending to comply with the programmer’s instructions while pursuing other goals).

The ethics of AI

Ethics is crucial in AI (although little or nothing is said about it) because artificial intelligence systems should govern their behavior according to human values, aiming for sound development and beneficial uses that help rather than harm society.

For this reason, when we talk about ethical frameworks, we refer to the sets of rules that govern an AI system, encompassing values, principles, and techniques that guide moral conduct in its development, implementation, use, and sale.

Changes in AI ethics through time

According to Hagendorff (2024), AI ethics has evolved alongside technological advances, shifting from a rigid, reactive discipline to a more proactive field. It is no longer enough to write regulations to manage AI; one must also explain what those regulations entail and how AI ethical principles would be applied in practice. This shift gave rise to AI virtue ethics, which guides the cultivation of virtuous qualities in AI systems (rather than relying only on rules or consequences) and which also applies to the people in charge of developing, implementing, or using them.

AI ethics has since taken on a more legal dimension through acts and laws that address the ethical implications of these systems. As a result, the field has diversified to address both technical and theoretical issues, with two components:

- AI alignment: Ensuring that AI behaves as expected, in line with human values and goals, while remaining reliable, safe, and useful.

- AI safety: Ensuring that AI is designed and used for beneficial purposes with minimal risk or harm.

To understand this better, let’s review the ethical framework that underpins trust in AI systems.

Trustworthy AI

Trustworthy AI (TAI) is an ethical AI framework that promotes trust in AI systems. According to the European Commission’s report Ethics Guidelines for Trustworthy AI, this concept has three components. AI must be:

- Legal: It must comply with applicable laws and regulations.

- Ethical: It must adhere to ethical principles and values.

- Robust: From a technical perspective, AI must function correctly even in adverse conditions, remaining stable and maintaining its performance; from a social perspective, it must take human contexts into account to minimize unintentional harm.

In addition, the European Commission states that trustworthy AI is key to enabling responsible competitiveness, because this reference framework provides a foundation that allows people to trust that the design, development, and use of AI are legal, ethical, and robust. In turn, it fosters sustainable innovation that benefits and protects human flourishing and the common good of society.

Additionally, trustworthy AI is based on four ethical principles and seven key requirements, as shown in the following graph:

The four ethical principles are respect for human autonomy, prevention of harm, fairness, and explicability. The seven key requirements for the successful development, implementation, and use of AI systems are human agency and oversight; technical robustness and safety; privacy and data governance; transparency; diversity, non-discrimination, and fairness; societal and environmental well-being; and accountability.

Distrust, ethics washing, and risk factors

To examine this issue, consider what Sam Altman, CEO and co-founder of OpenAI, said about trusting these systems in the first episode of the OpenAI podcast:

“People have a very high degree of trust in ChatGPT, which is interesting because AI hallucinates. It should be the technology that is not trusted much... People are having very private conversations with ChatGPT. ChatGPT can become a source of sensitive information, so I think a framework that reflects this reality is needed,” Altman said.

Stamboliev and Christiaens (2025) also note that the ethical principles underlying AI regulation are formulated as non-binding statements, that is, agreements that create no legal obligations, much like initial negotiations in which both parties express an intention to collaborate without being legally obliged to follow through. According to these authors, this is linked to ethics washing, in which the AI industry claims ethical credentials when it suits it, without earning such approval through responsible business practices.

Sectors such as medicine, finance, and education also have their own considerations regarding AI trustworthiness. In medicine, for example, studies show considerable distrust of AI-based diagnostic systems, as people prefer the holistic perspective of a human doctor to that of an app. Another notable example occurs in finance, where many investors prefer human predictions to those of an algorithm, a phenomenon known as algorithm aversion, because they place more value on human judgment (even when the algorithm has proven more accurate and less error-prone).

Another recent case that has fueled distrust of AI on security and privacy grounds is the incident involving US Secretary of State Marco Rubio, in which an impostor used AI to impersonate him (imitating his voice and writing style) in an attempt to obtain sensitive information.

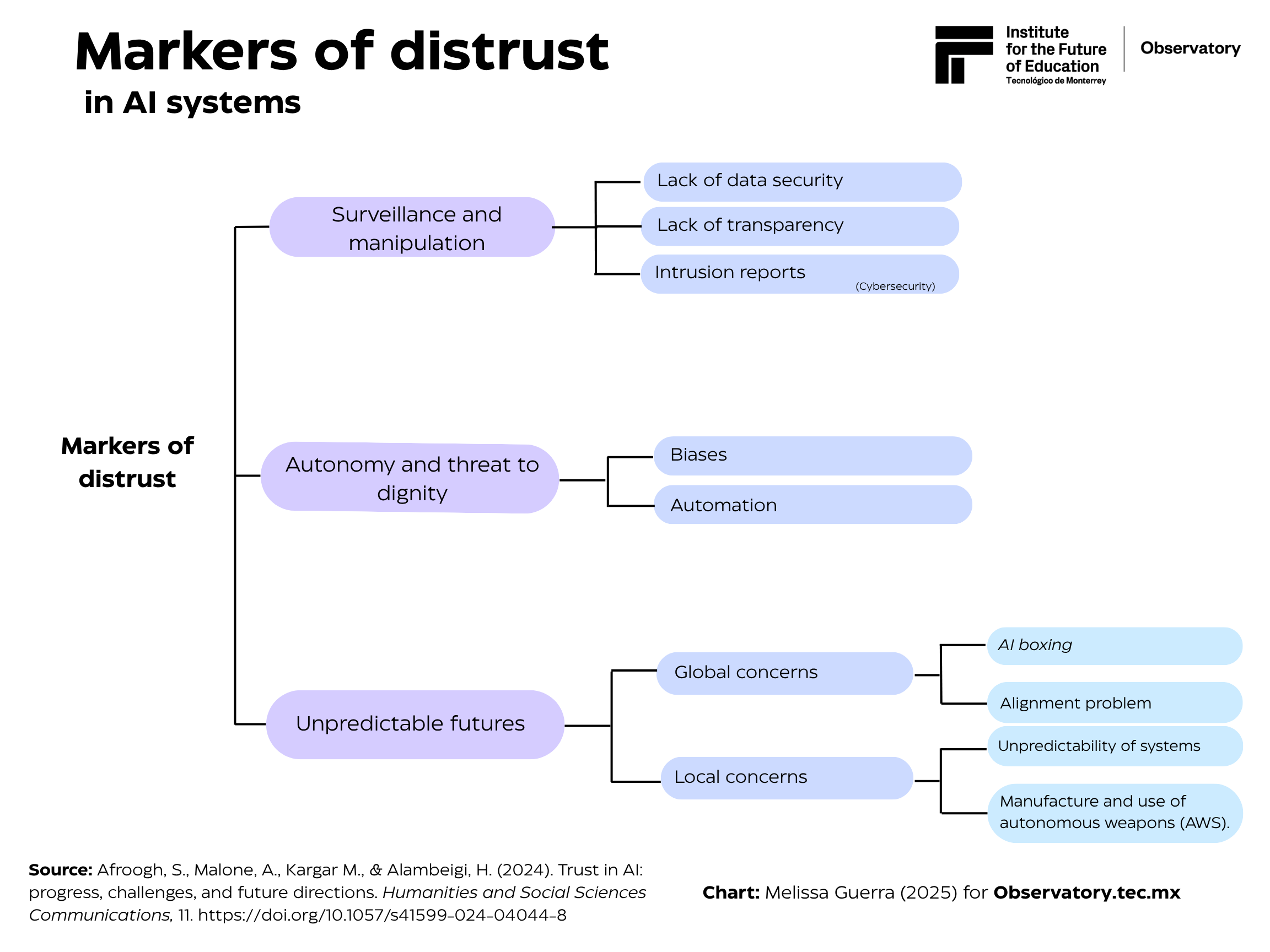

Many factors contribute to distrust in AI systems. Afroogh et al. (2024), in their article Trust in AI: Progress, Challenges, and Future Directions, identify the following:

These are not the only concerns among experts; there is also growing unease about Artificial General Intelligence (AGI). AGI would possess broad, powerful, and flexible intelligence rather than being focused on specific tasks (as generative AI is). These concerns stem partly from the prospect that AGI could process information faster than humans and draw on all the knowledge available on the internet, thereby surpassing its human creators. Another worry is the alignment problem, that is, the possibility that an AGI’s interests and values might not align with those of humanity (as the Claude 4 and o1 examples already hint). For this reason, strategies such as AI boxing (AI containment) have been proposed to contain advanced AIs or superintelligences and reduce their potential dangers, including risky decisions, escape from a restricted environment, and the manipulation or deception of humans.

Reliance on AI in education

In education, the most relevant ethical concerns behind distrust of AI involve bias, academic integrity, and AI’s potential impact on educational research and teaching practice. Part of this distrust stems from AI hallucinations, since generative AI has recently been used in education to create academic content (articles, lesson plans, presentations, courses, etc.). Caution is therefore warranted, and generated information should be verified for accuracy and reliability.

Studies have also found that parents worry that AI systems make students overly dependent on technology, reducing their ability to think critically and independently. These findings align with recent MIT research on cognitive debt, which reported lower neural activity (a less engaged brain) in people using LLMs such as ChatGPT.

So, should AI be trusted or not?

AI promises a better life; however, this often means sharing sensitive information and losing some cognitive ability in exchange for saving time, automating processes, and avoiding tedious tasks. It is understandable to be wary of AI systems when it is unclear who controls them and how, especially since compliance with ethical regulations usually falls on the creators and developers of these systems, not on the end user.

However, end users are also responsible for understanding the product they are using and its potential repercussions (depending on how it is used), although not to the same extent or on the same scale as the titans of the AI industry. For this reason, the rules and regulations governing AI systems are essential: they help ensure that development and implementation adhere as closely as possible to ethical, fair, responsible, and benevolent use while still promoting innovation.

Indeed, there are many concerns about the future of AI systems. Whether we choose to trust them will depend on what we are willing to lose or gain in the process.

Translation by Daniel Wetta

This article from the Observatory of the Institute for the Future of Education may be shared under the terms of the CC BY-NC-SA 4.0 license.