Since the Generative AI (GenAI) boom began, not so long ago, people have used it for various tasks that, little by little, have become increasingly diversified. Its “Wow” factor? The culture of convenience.

It is essential to recognize that the culture of convenience stems from the desire for comfort and instant gratification. In a globalized, materialistic world, this desire has fostered the pursuit of an ever simpler life. This mentality of minimum effort has spread, reinforced by generative AI (although it is not the only factor).

However, such a lifestyle carries inherent risks to physical and mental health, the economy, and the environment, as well as to personal aspects of life, such as loneliness, superficial interpersonal relationships, and a loss of meaning and satisfaction.

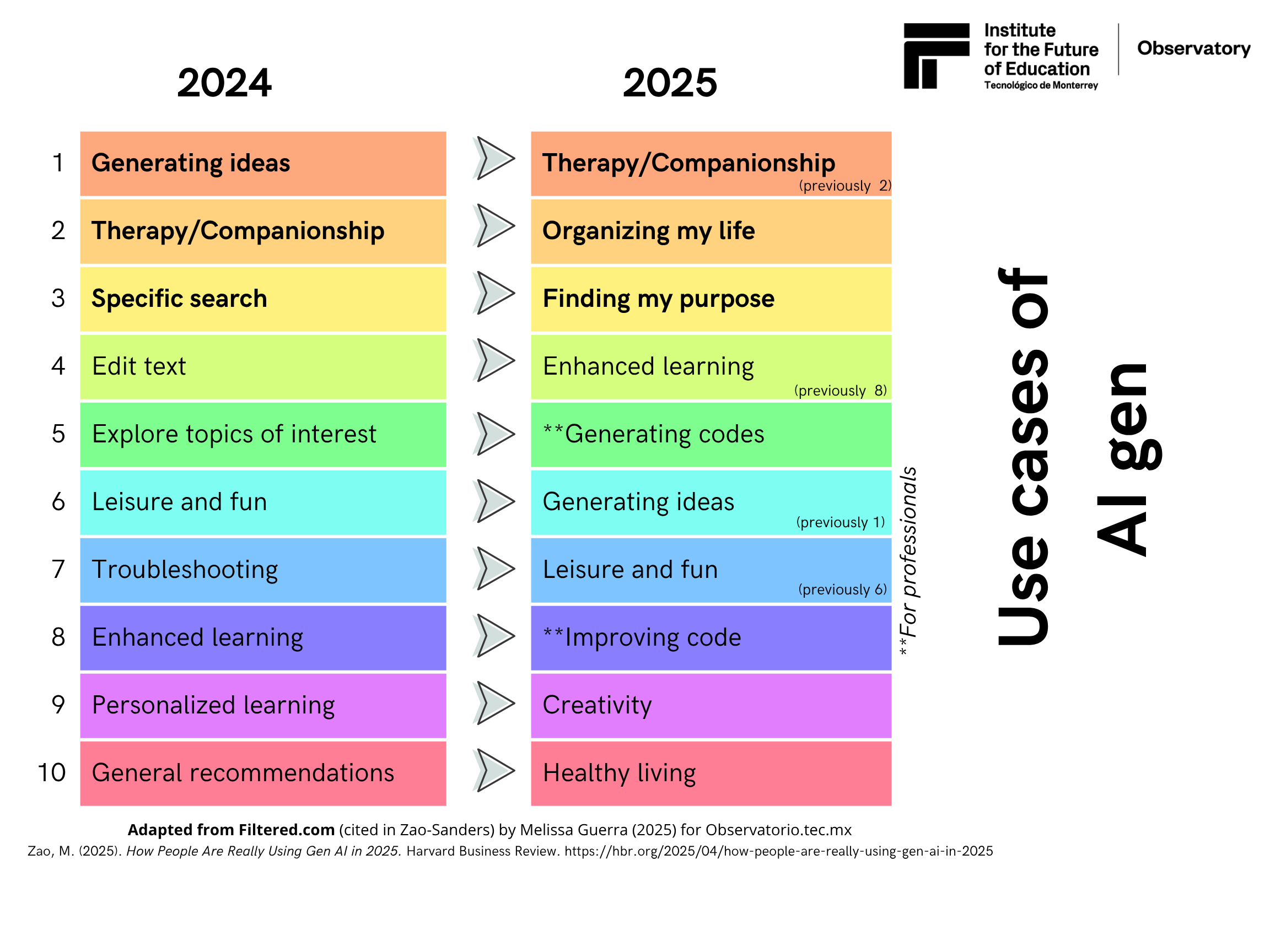

So, what is the relationship between the culture of convenience and generative AI? According to Marc Zao-Sanders’s article How People Are Really Using Gen AI in 2025, published in the Harvard Business Review, the uses of GenAI have fluctuated over time, highlighting particular trends that correlate with some of the adverse effects of the convenience culture on society: mental health problems, lack of connection in a connected world, superficial relationships, and feelings of lack of purpose or meaning in life.

The graphic above illustrates that in 2024, the use of generative AI was generalized, encompassing applications such as ideation, text editing, recommendations, problem-solving, and exploring topics of interest, among others. However, 2025 marks a slight shift towards greater specificity, as AI has infiltrated many aspects of daily life, fulfilling societal needs. Notably, programming is on the rise because AI models are more powerful and better trained for specific tasks, such as code generation.

Below, the three primary uses of AI in 2025 are broken down to understand these generative AI usage trends and their correlation with the culture of convenience.

AI and its role in accompaniment

Studies suggest that human interaction is necessary to enjoy mental health and well-being because chronic loneliness can cause depression, sleep disorders, addictions, autoimmune diseases, an increased risk of cardiovascular diseases, and metabolic and neurological disorders, among others. Countries such as the United States, the United Kingdom, and Japan have declared loneliness a health pandemic, which, according to experts, has worse health consequences than obesity.

Why do people use AI to alleviate loneliness?

In a globalized world, the digitalization of human relationships is transforming human interactions, affecting social integration and participation, and in turn triggering adverse effects such as alienation and feelings of isolation. Thus, loneliness, which is increasing across all demographic groups, reflects changes in relationships of social recognition.

For example, people use chatbots or AI applications to engage more easily in emotional interactions, whether for friendship, romance, or therapy. For this reason, it is essential to understand what AI companions are. According to Freitas et al. (2024), these synthetic interactive partners offer emotional support through complex responses powered by natural language processing, machine learning, and cognitive computing that drive sophisticated interactions through a language that simulates empathy. (These systems cannot feel real emotions.) Examples of these platforms are Replika, Cleverbot, Chai, Woebot, and Kuki.

For Jacobs (2024), AI companions are cultural machines in the sense that they reflect and are part of cultural and social processes in dynamic, digital, and complex contexts, which has led to the singularization of the human subject, that is, to their individualization. Additionally, he explains that users give meaning to their relationships with AI.

So, why do users seek AI companions to alleviate loneliness? Freitas et al. (2024) identify the following reasons:

- The absence of human interaction.

- The accessibility and availability of AI apps or platforms.

- The psychological need to “be heard.”

- The search for social recognition.

AI as a therapist

First, it is essential to understand that, in addition to conventional face-to-face interventions, internet-based interventions are also available in two forms: live or in real-time (using platforms such as Zoom), and asynchronous, i.e., those that utilize apps (with or without the guidance of a specialist). Often, both methods (face-to-face and internet) are used. Generative AI enters the dynamic as a tool to enhance internet-based interventions.

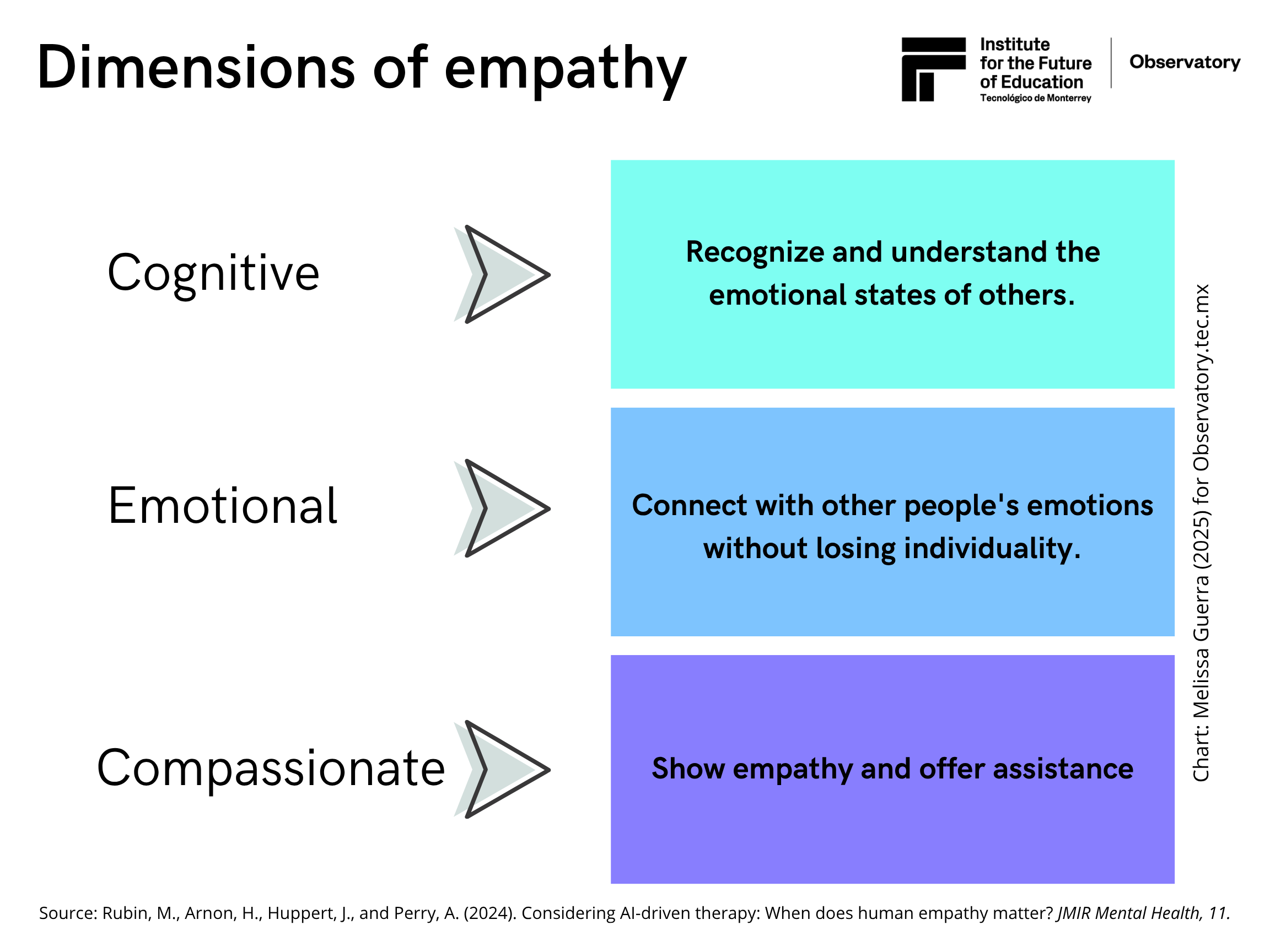

These interventions are intended to enhance emotional, psychological, and social well-being, thereby helping individuals manage stress and make informed decisions. Empathy plays a key role in this process. To understand how generative AI fits into a therapeutic process, and where its limitations lie, it is necessary to understand the three dimensions of empathy (see graphic below):

Although studies suggest that GPT-4 already possesses advanced knowledge of theory of mind, i.e., it is capable of understanding and using cognitive empathy, AI cannot recognize or utilize the other types of empathy; it can only imitate them through language that simulates empathy for users.

Why do people seek an AI therapist?

There are several reasons why people use artificial intelligence for therapy. Among these are the accessibility of these tools and their availability and 24/7 immediacy, which are desirable during an emotional crisis. Other primary benefits include anonymity and privacy; also, these tools provide a judgment-free environment. Moreover, users can feel in control of specific issues or situations. Added to this is the mental health stigma that still exists for certain people and contexts.

As can be seen, many of these reasons relate to convenience, meaning something easy and fast, without implying that AI tools are ineffective. Studies have shown that AI interventions can reduce symptoms of depression and anxiety, yielding positive experiences among people.

AI to organize my life

It’s very common nowadays to ask Siri for the best directions to work or school, ask Alexa to play our favorite songs while we work, tell Copilot to compose an email, or ask Notebook to summarize the PDF that the teacher assigned as homework.

Even if we don’t want it, AI integrations are here to stay, reinforcing our culture of convenience. In these cases, automated tasks, personalized assistance, and tracking habits all boil down to simplifying daily decisions, with the promise that AI will do everything for us, allowing us more time for essential things. However, we must be cautious about relying on these tools, as they can compromise higher-level skills.

In a post on social networks, I recently came across a clear example of this in education: a teacher described integrating a class activity meant to stimulate imagination. As the teacher recounted, he was surprised that, instead of using their imagination and creativity to answer a reflection question, many of the children opened ChatGPT on their tablets and asked it precisely what the teacher had asked. This reflects a strong dependency on technology and a loss of critical skills among children. Therefore, it is essential to know how and when to use these tools, so that AI does not deepen the dependency problems that technology has already created.

AI as a tool to search for my life purpose

Remarkably, a sense of disconnection from oneself and those around us prevails in a connected world. According to Viktor Frankl in his work Man’s Search for Meaning, the search for the meaning of life is a primary force; it does not arise from instinctive impulses. Life’s meaning is unique and specific to each person, who must discover it themselves to satisfy their own will to meaning.

For their part, Costin and Vignoles (2021) argue that a sense of meaning in life is a key aspect of human well-being. Beliefs, values, attitudes, identities, and the like are frameworks of meaning through which a person sees and interprets the world and themselves, providing a sense of coherence, purpose, and meaning.

In this sense, the search stems from the human need to find purpose and meaning in life, a particularly challenging task in the modern world.

Why do people search for the meaning of life through AI?

As with the previous uses, AI can converse on a wide range of topics, so it can suggest introspection and reflection exercises, depending on the prompts provided. It also provides quick answers, which reflects its accessibility and availability; sometimes, one cannot find people willing to listen. Additionally, AI can foster confidence in security and privacy while enabling the exploration of complex topics.

Ethical and safety implications of AI tools

Significantly, AI chatbots raise ethical concerns about the security and privacy of sensitive data. AI relies on training data and algorithms that may have design and implementation biases, which can lead to harmful advice. (AI may or may not understand specific cultural contexts.) Therefore, transparency and continuous evaluation of these tools are crucial to ensure their responsible use.

Another possible risk is AI hallucinations, which occur when the chatbot or tool perceives patterns or objects that do not exist (for the human observer), generating a result that does not make sense or is inaccurate. Therefore, experts recommend using these tools in conjunction with professional advice; they should not be considered a substitute for guidance from a healthcare professional. Regulating these tools in the healthcare sector is also essential, as their use remains a gray area that is not widely discussed.

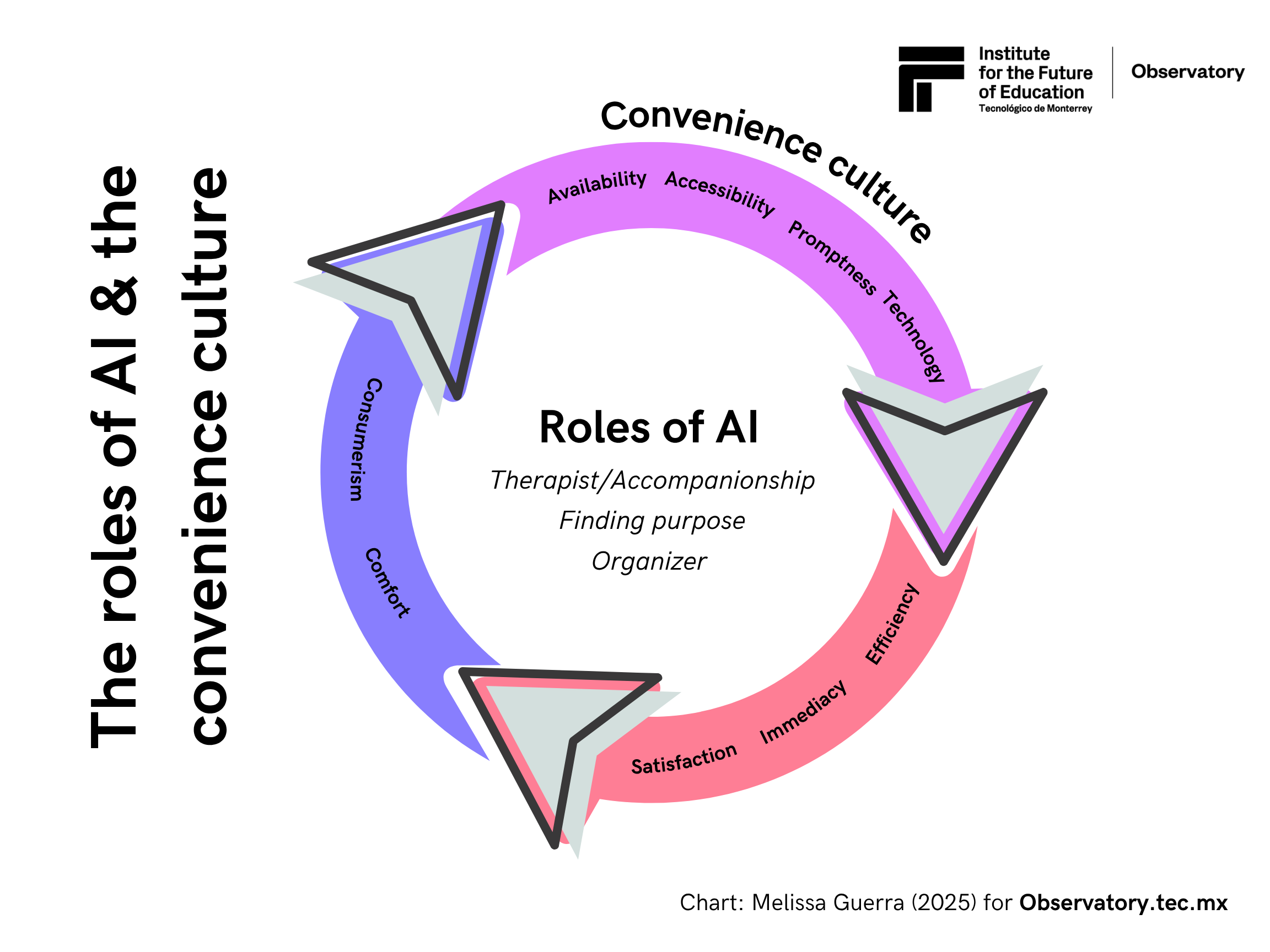

The Convenience Culture in the Age of AI

The article Why Leaders Must Choose Humanity Over Convenience In The AI Era mentions that “AI is a mirror of society, a reflection of what we give or feed it… therefore, there is a primary component of bias” (Hoque, 2025, as cited in Pontefract, 2025). Convenience has permeated culture over the years, with the arrival of artificial intelligence reinforcing this trend. (See graphic below.)

It has become popular to ask ChatGPT for diagnoses and treatment suggestions based on an elaborate prompt (assigning this AI the role of therapist or psychologist), or to ask it to keep us company. Now, it is easier than ever to go hours or days without human interaction, but generative AI should be used for these purposes with caution.

Although we cannot completely curb the culture of convenience, or the way the ease and efficiency of these tools reinforce it, it is essential, as a first step, to recognize that many of our daily actions contribute to this culture. As generative AI becomes integrated into everyone’s operating system, we must be cautious with it, because in this capitalist world, everyone is competing to capture users’ attention, which can lead to unwanted adverse effects.

Translation by Daniel Wetta

This article from the Observatory of the Institute for the Future of Education may be shared under the terms of the license CC BY-NC-SA 4.0
