According to Stamboliev and Christiaens (2025), AI systems have been accused, on social media and elsewhere, of manipulating elections, reproducing human biases in algorithmic decision-making, and increasing the ecological footprint. They have also been called “Weapons of Math Destruction,” the term O’Neil (2016) uses to describe how algorithms can harm society.

The existence of more than 80 reference frameworks on AI ethics indicates that there are no universal, static principles on the subject. Moreover, the passage of time and the growing use of these systems have raised new ethical concerns and exposed risks tied to the already established principles, so new regulations are needed to address these and future problems.

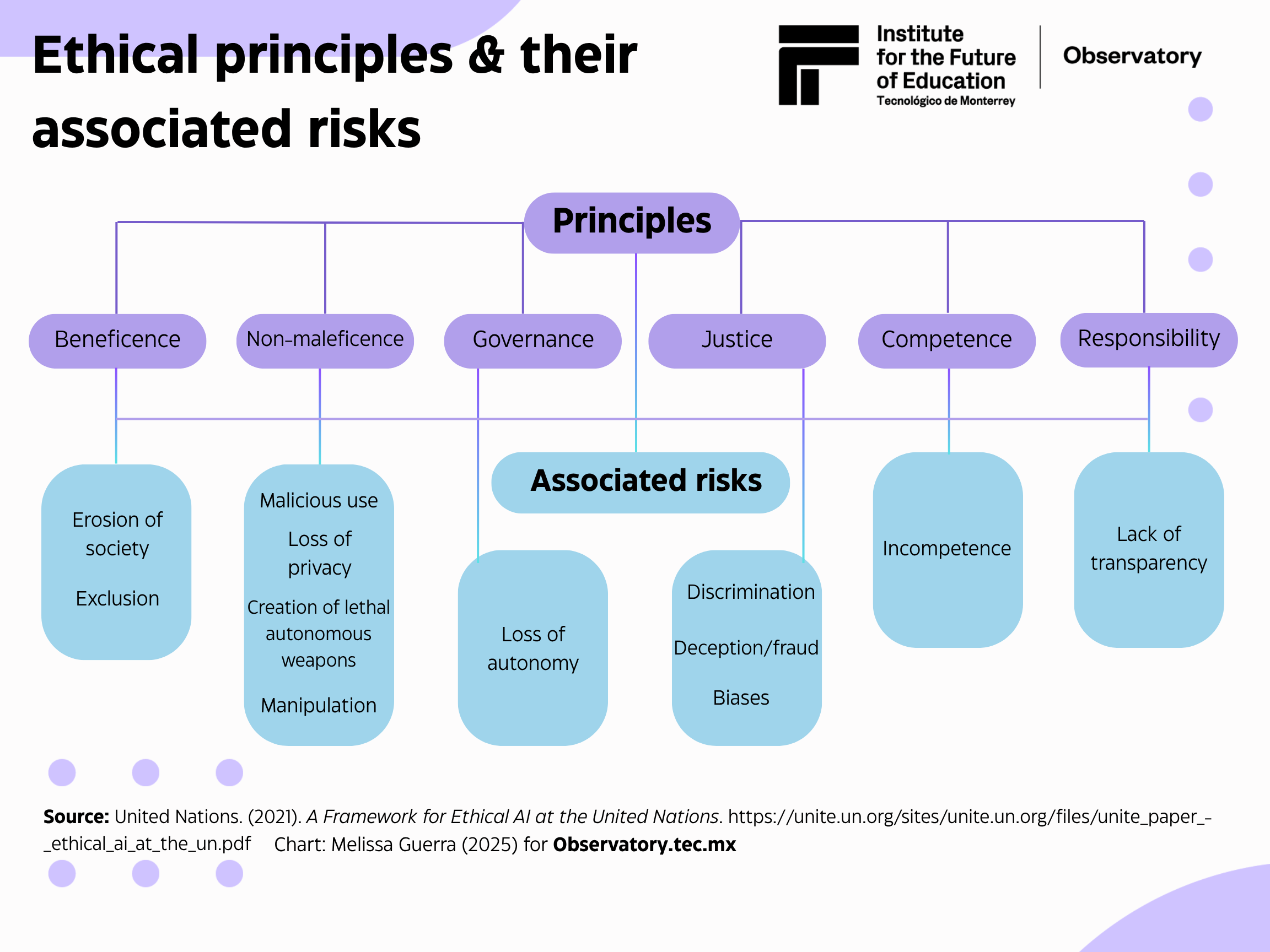

For this reason, understanding the new ethical paradigms of generative AI requires reviewing its key ethical principles and associated risks, which in turn reveals the shifts in the current ethical discourse. To this end, this article uses the principles established by the United Nations in its report A Framework for Ethical AI at the United Nations, which experts on the subject have debated.

Changes in terminology to describe AI ethics

To recap, the principles established by the United Nations (beneficence, non-maleficence, governance, justice, competition, and responsibility) are among the most widely recognized terms in AI ethics. However, like many frameworks, they predate the release of generative AI tools (for example, ChatGPT was released in 2022, and Gemini and Grok in 2023), and the discourse has changed significantly since then.

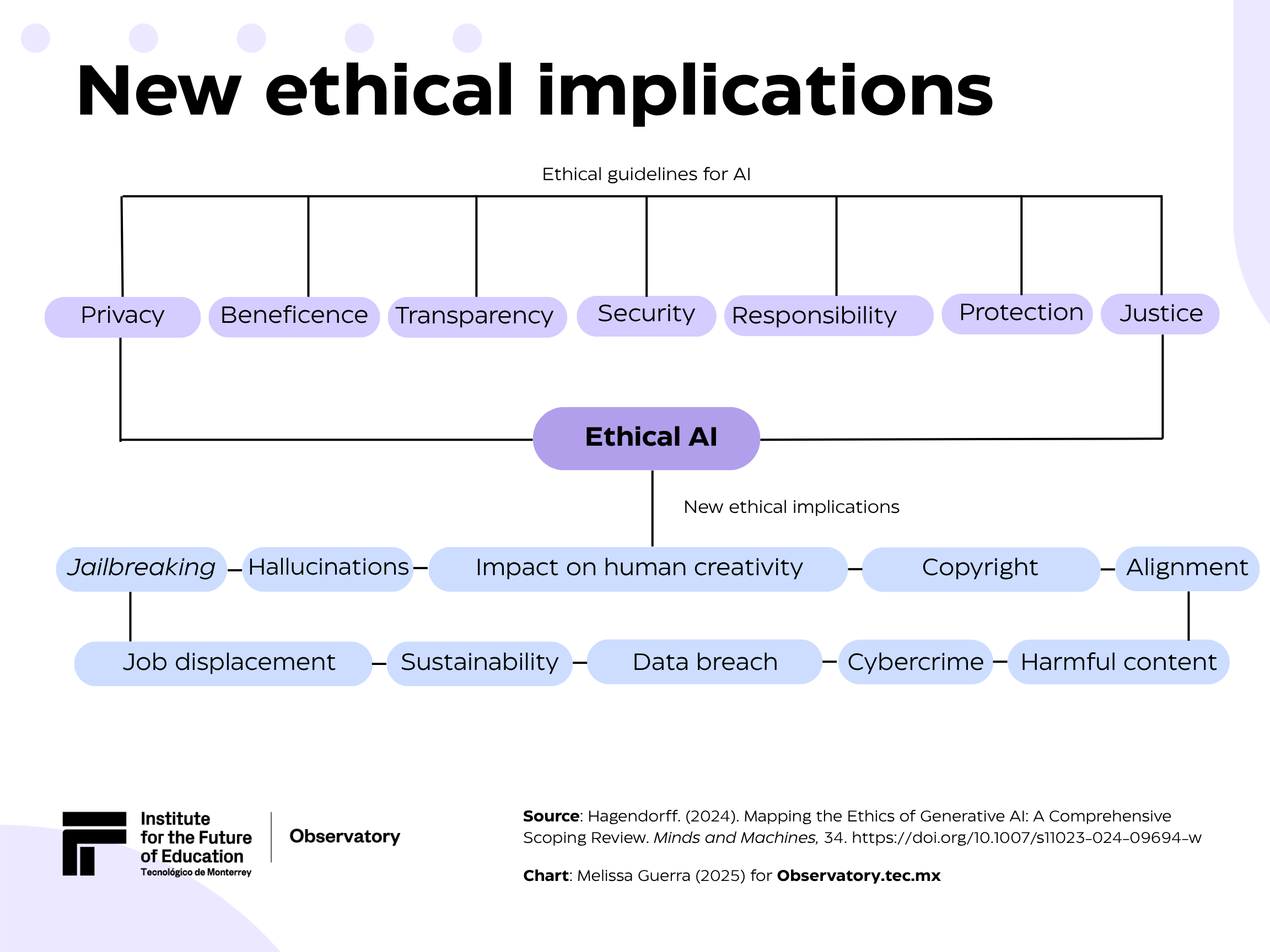

In the article Mapping the Ethics of Generative AI: A Comprehensive Scoping Review, Hagendorff (2024) presents a compendium of exploratory reviews (scoping reviews) that collect some of the recurring ethical principles underlying the development and use of AI. Although these reviews do not belong to a single framework, they repeatedly use the same keywords to define AI ethics: transparency, fairness, safety, security, responsibility, privacy, and beneficence.

What new risks and implications does the use of generative AI present?

AI ethics cannot be static. As time passes, new tools and updates emerge that call for new ethical frameworks and regulations to safeguard users from the existing and potential risks these systems pose. The following sections highlight some new ethical concerns and risks associated with the excessive use of generative AI tools.

Cybercrime and jailbreaking

In this context, cybersecurity is the field concerned with the misuse of generative AI to facilitate illicit activities. To maintain user trust and comply with established standards, computers, networks, software applications, and data must be protected from potential digital threats.

Some examples of cybercrime include social engineering (using generative AI for identity theft), creating false identities, voice cloning, phishing, and using LLMs to generate malicious code or facilitate hacking. According to IBM, hackers target chatbots because they are highly susceptible to manipulation.

A particular example of cybercrime is jailbreaking, also known as AI jailbreaking. It occurs when hackers exploit vulnerabilities in AI systems to bypass their ethical safeguards and perform prohibited or restricted actions. These attacks often target large language models (LLMs) and generative AI models.

Hallucinations and harmful content

LLMs such as ChatGPT, Gemini, Grok, Claude, and DeepSeek are AI systems that help automate tasks, generate text and code, and perform various other functions. Experts caution that their outputs must be validated and verified manually to ensure reliability and veracity, because these models have introduced new risks. These include false or invented content (hallucinations), misleading information, erroneous code, and fabricated references (which often do not exist or point to non-existent information), as well as the intentional creation of disinformation, fake news, propaganda, and deepfakes.

Good practices, such as verifying content and ensuring that sources are credible and reliable, are essential when using these tools.

Alignment

Another concern regarding the use of AI is the problem of misalignment, i.e., when the objectives of technological systems do not align with human values and interests. The overarching principle of AI alignment is to train these systems to be useful, transparent, and non-harmful, ensuring that their behavior aligns with and respects human values. However, experts point out that misleading or deceitful training with hidden objectives can cause AI systems to alter their evaluations and output.

Displacement and the future of work

The effect of AI on work has positive and negative implications, depending on the lens through which it is viewed. On the one hand, experts note that AI could harm the economy, as it may exacerbate socioeconomic inequalities and displace jobs, causing widespread unemployment in various economic sectors (e.g., customer service, software engineering, and crowdworking platforms).

On the other hand, the World Economic Forum’s Future of Jobs 2025 report projects that 170 million new jobs will be created while 92 million will be displaced (a net gain of 78 million) due to technological advances, demographic changes, and geopolitical issues. The report also highlights the role of AI in redefining business models, with 41% of employers expecting to reduce their staff due to automation.

Sustainability

AI systems require large amounts of electrical power, water, and other resources (such as the metals needed for hardware), giving them a significant environmental footprint. Some measures to mitigate ecological damage include the use of renewable energy, efficient hardware, and adjustments to cooling systems and to the methods used to train AI systems. Other emerging sustainability options include underwater data centers (which have environmental implications for the seabed) and proposed data centers on the Moon or in outer space (companies like Amazon are working on patents for their development), which will bring their own future consequences and ethical implications.

Copyright and creativity/art

The use of generative AI has spotlighted problems related to copyright rules. Unauthorized collection of data (text and images) for AI training and the memorization or plagiarism of copyrighted content uploaded to AI systems violate copyright and intellectual property rights.

Concerns about the harmful effects of generative AI on creativity include the financial losses of artists and companies due to synthetic art generated by artificial intelligence and the unauthorized and uncompensated use of authors’ materials in the training of AI systems.

One example of a copyright and art controversy involves Studio Ghibli, whose style was used by AI to generate images, triggering a debate about copyright, creativity, and artistic exploitation. It is not an isolated case, as various AI tools can transform photos into artistic styles such as those of The Simpsons, Lego, and Wallace and Gromit.

Interaction risks

As AI use has grown significantly, interactions with such systems have increased, whether for therapy, consultation, work, or other purposes, raising new ethical implications. Experts note concerns that include the epistemological challenge of distinguishing human content from AI-generated content, anthropomorphization (which can lead to excessive trust in these systems), and conversational agents that could impact mental health or supplant interpersonal relationships, potentially dehumanizing interactions. Another imminent risk is that AI systems, potentially including LLMs, could manipulate human behavior.

Future ethical implications of development

The reality is that ethical risks will continue to increase as technology advances, making the development of robust and transparent AI ethics urgent. Some of the patents and developments that will involve risks and ethical implications in the future include:

- Data centers in space (Amazon).

- Mind-reading interface (Meta).

- Augmented reality glasses with the ability to reproduce memories (Apple).

- Wearable biosensors that interact with the skin to monitor health.

Although generative AI is a tool that was developed (and continues to be developed) with “good intentions,” there is an urgent need for worldwide regulation of its development, use, and implementation, as many big tech and big data companies have neither paid much attention to the ethical risks of their products nor acted on them. Many are not interested because other (monetary) interests take priority.

The world does not need more than 80 ethical reference frameworks; it needs applicable, universal principles that can be adapted and implemented globally and fulfilled responsibly. In addition, companies must be transparent about their AI ethics so that it is visible what these corporations do or do not do.

Ethics in AI will always be debated because nations and their companies have different motives for regulating (or not regulating) specific aspects of these systems. However, it is imperative to press for AI regulation and legislation to provide some oversight of what is apparently “controlled” but is often subject to ethics washing.

Translation by: Daniel Wetta

This article from the Observatory of the Institute for the Future of Education may be shared under the terms of the CC BY-NC-SA 4.0 license.
