Since its early days, the Internet’s credibility has been scrutinized because its open nature allows anyone to participate, create, question, and edit. Just as it is possible to find valuable sources backed by reputable institutions, it is also possible to gather false information with no value. Moreover, we have been oversaturated with online information for years, but that situation pales in comparison to what we encounter today, primarily due to content created through generative artificial intelligence (generative AI).

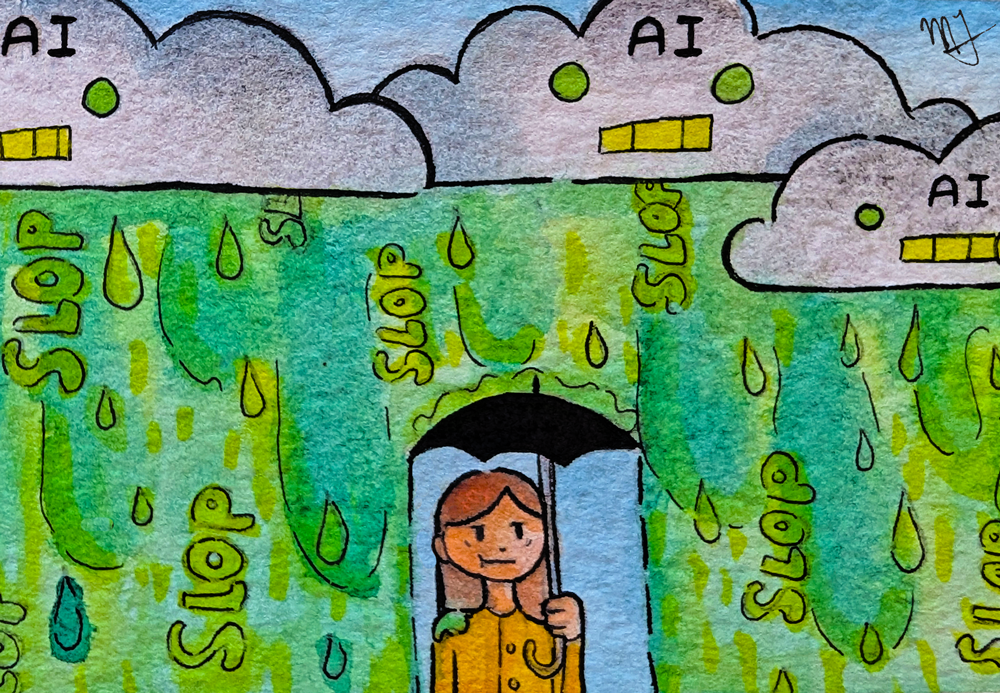

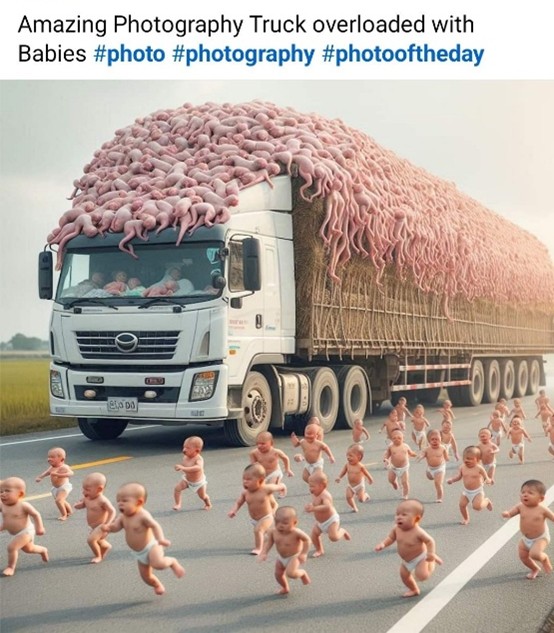

Despite the potential positive applications of artificial intelligence, we now face wave after wave of misinformation, bizarre videos, and meaningless memes appearing on social networks. From surreal images of Jesus Christ fused into a shrimp to cute bunnies hopping on a trampoline, these posts go viral because they seem either real or incredibly ridiculous. This trend is known as AI slop, referring to content produced by generative artificial intelligence tools that require minimal human intervention and, therefore, are of inferior quality and make no significant contribution.

Popular social networks like TikTok and YouTube are overflowing with videos created entirely with generative AI. One content creator claims to earn approximately $10,000 by generating videos: he simply writes prompts in an AI application that can create up to 100 TikTok videos per hour. This can be done without worrying about collecting or disseminating truthful information, recording oneself, or using editing programs.

Notably, the data on which AI relies to generate this content is recycled from forums such as Reddit and X, alongside the work of content creators who strive to craft their own videos, podcasts, art, and so on. Many of these authors feel tremendous frustration and helplessness in the face of AI-generated videos. Competing with mass-produced audiovisual products is tough when a video made by people can take weeks or months, and sadly, their work can get lost in the torrent of mediocrity without garnering the attention it truly deserves.

A 2023 NATO StratCom Center of Excellence report found that for as little as €8 (approximately $9 US), it is possible to purchase thousands of AI-generated fake views, likes, or comments on most popular social networks. This, combined with AI-generated content lacking human input, is creating a bizarre digital universe in which AI bots have prominence over humans. Sadly, the spaces designed for people to interact and share, regardless of their geographical location, are gradually being taken over by automated entities tasked with generating clicks and views. It is no surprise that the viral “Dead Internet” theory is becoming more of a reality day by day due to the fake interactions manufactured by generative AI.

A clear example of the above is Facebook, a popular social network that, a few years ago, was characterized as a platform for people to share their thoughts and photographs. The user’s activity could also be recorded in the various mini-games offered. Now, this network has become some kind of Frankenstein, featuring images of unrealistic people in “vulnerable” situations, out-of-this-world landscapes, impossible architecture, non-existent art, as well as absurd news and videos that aim to evoke surprise, laughter, tenderness, indignation, or even anger.

Images collected from @FacebookAISlop, on X.

Unfortunately, because much of this Facebook content has themes that evoke strong emotions, many people (especially older adults) feel moved and interact with this material, falling into the trap of these “content creators” who, rather than disseminating valuable information, seek their own personal benefit.

Consider the example below: the left image is a post claiming to show a baby peacock, where the description seeks to evoke tenderness (“Baby peacock, your ‘awwwww’ moment of the day”). However, this image could not be further from reality; the photo on the right shows the real appearance of a peacock chick.

Right picture: Rob Croukamp, istock.com

While a baby peacock can be quite adorable, some people will exaggerate its appearance on the Internet to generate more reactions and comments, regardless of the cost in misinformation.

This type of image is just the tip of the iceberg of the misinformation turmoil that we face every day, not only on social networks but on the Internet in general. Unfortunately, AI slop is not limited to memes or funny videos. For example, this video shows a touching moment of a little girl playing with a tiger; obviously, in real life, this behavior would be highly irresponsible and dangerous. A post like this spreads misconceptions about coexisting with wild animals, which can put at risk those who believe this type of video is real.

As another example, some YouTube channels upload content narrating historical events entirely generated by AI. Unfortunately, these images created using AI tools can be historically inaccurate, and their narratives frequently contain incorrect and fabricated facts. Besides significantly damaging the views and popularity of videos researched, recorded, and edited by real people, whether specialists or amateurs, AI-generated videos disseminate mass misinformation about matters that define us as humanity.

“[AI] threatens to literally rewrite history – or people’s understanding of it – with all of the biases imbued into AI by its training material, and, increasingly, by the willful manipulation of the companies that own these tools.” – Jason Koebler, 404 Media.

Another example of AI slop affecting people is the spread of false information about significant events such as natural disasters. Last year, the southeastern United States experienced two hurricanes that devastated parts of various cities in their wake; people turned to the Internet to seek more information or find shelter. Unfortunately, many alarmist and false AI-generated articles, images, and posts about the storms appeared in search engine results, spreading misinformation that caused anxious people to waste their time.

AI slop manifests itself prominently on social networks, but it has also permeated other media. Already, some books being published and sold in digital bookstores are entirely created by generative artificial intelligence, while others are AI-shortened or AI-synthesized versions of existing content. Moreover, many of these are published under names similar to those of real authors, in an attempt to deceive buyers and increase sales.

In the music world, some singers are discovering albums released under their names that they never recorded, featuring songs in their own voice and even titles and lyrics that closely resemble their style. Thousands of artists, albums, and songs are uploaded to popular streaming platforms without human intervention. Spotify has revealed that it has deleted 75 million songs from its application; these were removed during the approval process, having been identified as AI-generated content by the system’s users.

It does not help that large companies also promote the use of AI on their platforms. Studies conducted by Stanford and Georgetown Universities in 2024 revealed that Facebook’s recommendation algorithms were amplifying the appearance of AI-generated posts in people’s feeds, enabling such content to reach the maximum number of people.

“To social media giants, content is content; the cheaper it is, the less human labour it involves, the better. The outcome is an Internet of robots, tickling human users into whatever feelings and passions keep them engaged.” – Nesrine Malik, The Guardian.

Likewise, most digital sites and applications are increasingly incorporating elements of AI. Pinterest, for example, a site specializing in photographs and videos to inspire its users, now integrates images created with AI. Virtual stores also use AI to promote products that are not what they seem, such as this quartz cup that went viral. Users who ordered it instead received a product of poor quality that differed significantly from what was displayed in the images.

Additionally, applications with the sole intention of generating content have been emerging, such as OpenAI’s Sora 2, which is very similar to TikTok, except that all the videos found there originate from generative AI, where a prompt can create whatever the user requires. Besides the environmental costs generated by these applications, a social media platform full of fake videos adds another brick to a surreal mural that grows taller and taller, overshadowing an ever larger part of our reality.

AI is worsening the already existing problem of misinformation. Now, watching any video goes hand in hand with questioning its origin, because it is increasingly challenging for all generations to distinguish between reality and fiction, especially older adults (many of whom trust what they see on social networks) and the youngest, who have not yet acquired the knowledge or critical thinking to assess what they see. Moreover, AI-generated videos can be especially dangerous for children, as they often contain empty and meaningless content that is generated without supervision, either by the creator or by the platforms on which they are published. Besides not providing any value, they can include inappropriate, violent, or sexual content.

As mentioned above, many of these resources are used for educational purposes, and they can also become detrimental to the academic field. Both students and teachers can fall into the convenience of getting their answers from platforms such as ChatGPT or from the first response that appears on Google. Now labeled “Overview created by AI,” these top results have been shown to contain erroneous and hallucinated information. Still, many people may consider these sites authoritative, without verifying the provenance of the information they provide. That is why, in the current era of disinformation, it is more essential than ever for educational institutions to prioritize critical thinking, the search for truthful information, and media literacy, so that new generations can navigate a universe where reality and fiction are rapidly merging.

Translation by Daniel Wetta

This article from the Observatory of the Institute for the Future of Education may be shared under the terms of the license CC BY-NC-SA 4.0
